AI researchers want to solve the bot problem by requiring ID to use the internet

The researchers based their ideas on “proof of personhood” technologies developed by the blockchain community.

Artificial intelligence researchers are worried that AI bots are eventually going to take over the internet and spread like a digital invasive species. Rather than approach the problem by attempting to limit the proliferation of bots and AI-generated content, one team decided to go in the opposite direction.

In a recently published preprint paper, dozens of researchers advocate for a system by which humans would need to have their humanity verified in person by another human in order to obtain “personhood credentials.”

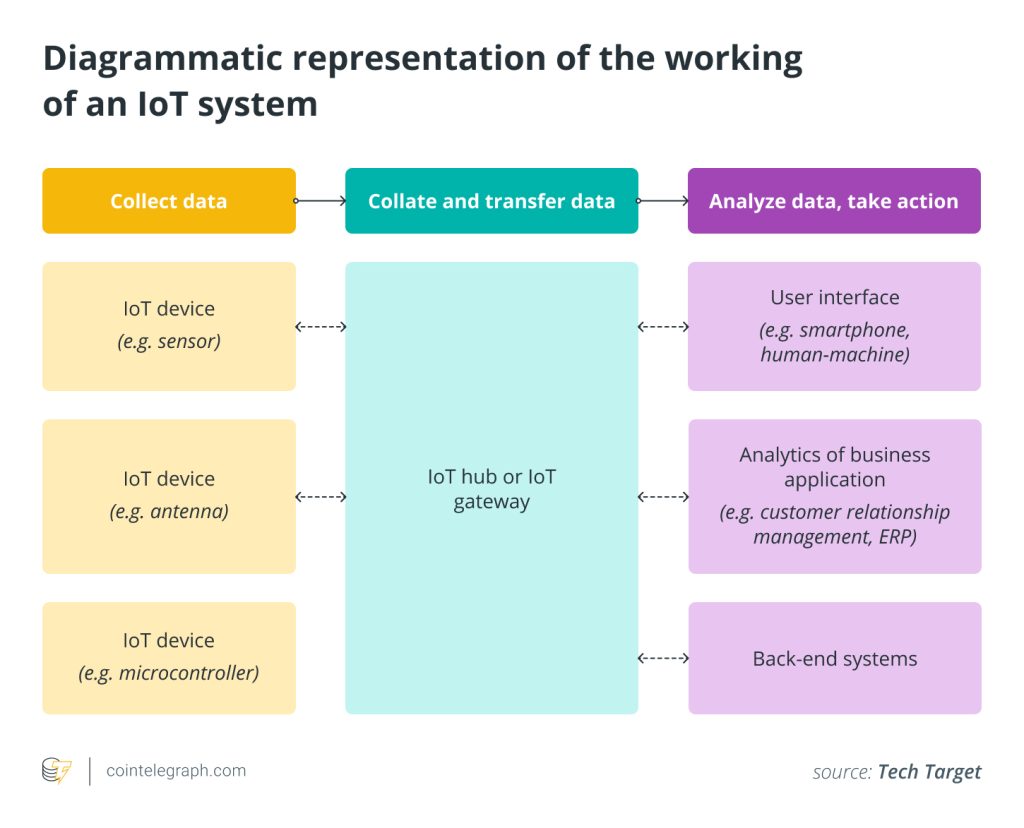

The big idea appears to be the creation of a system wherein someone could prove they were human without having to disclose their identity or any further information. If that sounds familiar to those of you in the crypto community, it’s because the research is based on “proof of personhood” blockchain technologies.

Digital verification

Services such as Netflix or Xbox Game Pass that require a fee to use typically rely on your financial institution to perform verification services. This doesn’t allow for anonymity but, for most people, this is fine. It’s typically considered part of the cost of doing business.

Other services, such as anonymous forums, can’t rely on payments as proof that a user is either human or, at the very least, a non-human customer in good standing. They have to take their own steps to limit bots and duplicate accounts.

As of August 2024, for example, ChatGPT’s guardrails would likely prevent it from being exploited to sign up for multiple free Reddit accounts. Some AI systems can get past “CAPTCHA” style humanity checkers, but it would take a robust effort to get one to follow the steps associated with verifying an email address and continuing the setup process to open an account.

However, the main argument posed by the team, which includes a long list of experts from companies such as OpenAI, Microsoft, and a16z crypto, as well as academic institutions including Harvard Society of Fellows, Oxford, and MIT, is that the limitations currently in place will only hold for so long.

In a matter of years, perhaps, we could be faced with the reality that, without being able to look someone in the eye, face to face, there would be no way to determine whether we’re engaging with a person or not.

Pseudo-anonymity

The researchers are advocating for the development of a system that would designate certain organizations or facilities as issuers. These issuers would employ humans for the purpose of confirming an individual’s humanity. Once an individual is verified, the issuer would certify that individual’s credentials. Presumably, the issuer would be prevented from tracking how those credentials were used. It’s unclear how such a system could be made robust against cyberattacks and the imminent threat of quantum-assisted decryption.

At the other end, organizations interested in providing services to verified humans could choose to only issue accounts to humans holding credentials. Ostensibly, this would limit everyone to one account per person and make it impossible for bots to gain access to these services.
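The issuer-and-service flow described above can be illustrated with a toy model. This is only a sketch under loud assumptions, not the scheme from the paper: the `Issuer` and `Service` classes and the `pseudonym` helper are hypothetical names, the `person_id` string stands in for the in-person check, and an HMAC-derived pseudonym is swapped in for whatever cryptography a real system would use. In particular, the sketch omits any proof that a pseudonym comes from a validly issued credential, which a real deployment would need.

```python
import hashlib
import hmac
import secrets


class Issuer:
    """Hypothetical issuer: hands out one secret credential per verified person."""

    def __init__(self):
        self._already_issued = set()  # people who already hold a credential

    def issue(self, person_id: str) -> bytes:
        # person_id is a stand-in for the in-person humanity check.
        if person_id in self._already_issued:
            raise ValueError("credential already issued to this person")
        self._already_issued.add(person_id)
        return secrets.token_bytes(32)  # random secret held only by the person


def pseudonym(credential: bytes, service_name: str) -> str:
    """Derive a stable pseudonym that differs from service to service."""
    return hmac.new(credential, service_name.encode(), hashlib.sha256).hexdigest()


class Service:
    """Hypothetical service that allows one account per pseudonym."""

    def __init__(self, name: str):
        self.name = name
        self._accounts = set()

    def register(self, nym: str) -> bool:
        # Assumption: the service trusts the pseudonym as presented.
        if nym in self._accounts:
            return False  # same credential is trying to open a second account
        self._accounts.add(nym)
        return True


issuer = Issuer()
alice_credential = issuer.issue("alice")
forum = Service("forum")
print(forum.register(pseudonym(alice_credential, forum.name)))  # True
print(forum.register(pseudonym(alice_credential, forum.name)))  # False
```

Because the pseudonym depends on the service name, two services cannot link the same person’s accounts by comparing pseudonyms, and in this toy model the issuer never sees where a credential is used.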

According to the paper, determining which method of centralized pseudo-anonymity would be the most effective is beyond the scope of the research, and the research does not address the myriad potential problems raised by such a scheme. However, the team does acknowledge these challenges and has put forth a call to action for further study.

