Four things Google Gemini users will be able to do soon

Google boss Sundar Pichai announced that the company’s AI model Gemini is being built into a slew of its products and services, including its flagship Search product.

Google’s artificial intelligence model Gemini is being woven into much of the tech giant’s technology, with the AI soon to show up in Gmail, on YouTube, and on the company’s smartphones.

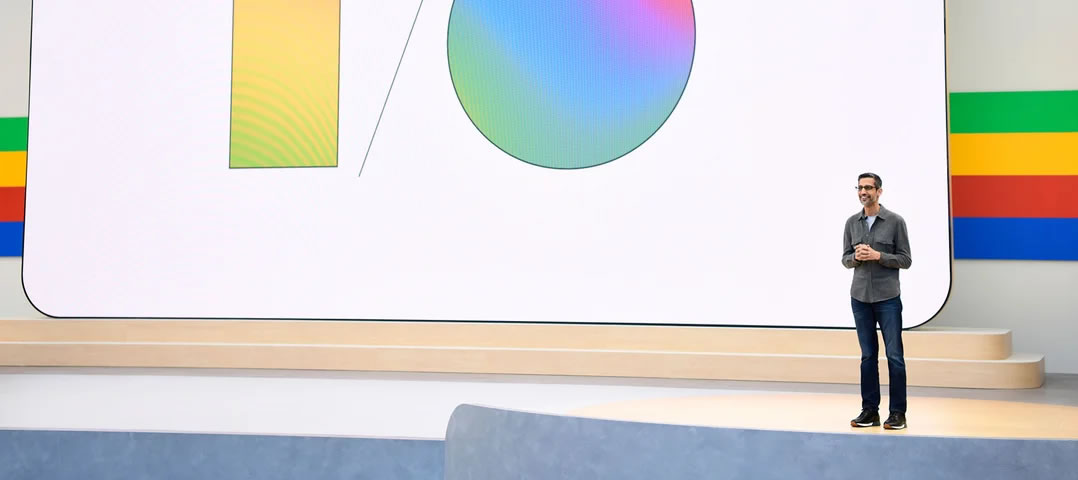

In a keynote speech at the company’s I/O 2024 developer conference on May 14, CEO Sundar Pichai revealed some of the places where Google’s AI model will soon appear.

Pichai mentioned AI 121 times in his 110-minute keynote as the topic took center stage, with Gemini, which launched in December, taking the limelight.

Google is incorporating the large language model (LLM) into virtually all of its offerings, including Android, Search, and Gmail, and here is what users can expect going forward.

App interactions

Gemini is becoming more context-aware and will be able to interact with applications. In an upcoming update, users will be able to call up Gemini to work with other apps, for example by dragging and dropping an AI-generated image into a message.

YouTube users will also be able to tap “Ask this video” to have the AI find specific information within a video.

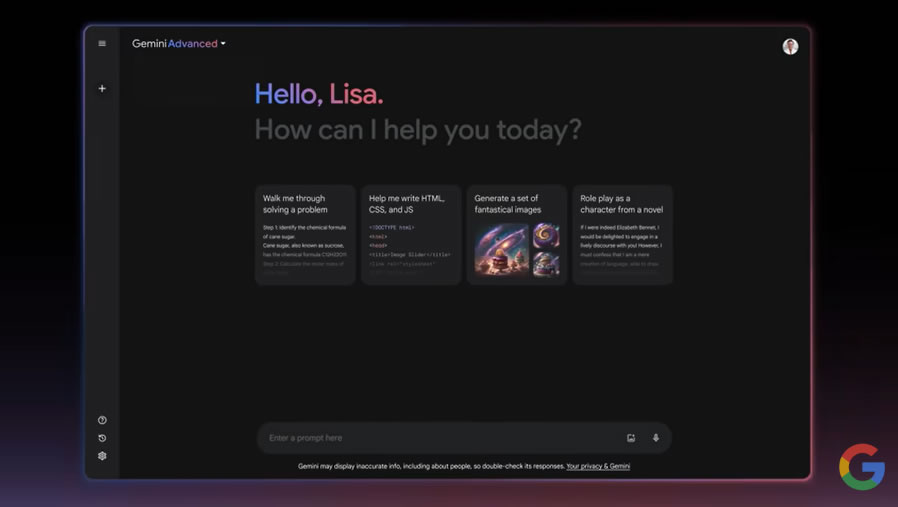

Gemini in Gmail

Google’s email platform, Gmail, is also getting AI integration: users will be able to search, summarize, and draft their emails using Gemini.

The AI assistant will be able to take action on emails for more complex tasks, such as assisting in processing e-commerce returns by searching the inbox, finding the receipt, and filling out online forms.

Gemini Live

Google also unveiled a new experience called Gemini Live where users can have “in-depth” voice chats with the AI on their smartphones.

The chatbot can be interrupted mid-answer for clarification, and it will adapt to users’ speech patterns in real time. Gemini can also see and respond to physical surroundings via photos or videos captured on the device.

Multimodal advancements

Google is working on developing intelligent AI agents that can reason, plan, and complete complex multi-step tasks on the user’s behalf under supervision. Multimodal means that the AI can go beyond text and handle image, audio, and video inputs.

Examples and early use cases include automating shopping returns and exploring a new city.

Other updates in the pipeline for the firm’s AI model include a replacement for Google Assistant on Android with Gemini fully integrated into the mobile operating system.

A new “Ask Photos” feature lets users search their photo library using natural language queries powered by Gemini. It can understand context, recognize objects and people, and summarize photo memories in response to questions.

AI-generated summaries of places and areas will be shown in Google Maps, drawing on insights from the platform’s mapping data.
