Democratizing AI development: How consumer GPUs are breaking barriers to entry

The AI industry faces bottlenecks caused by the scarcity and high costs of enterprise-grade GPUs.

DEKUBE introduces a network for distributed AI training that aims to democratize AI development by leveraging consumer-grade GPUs.

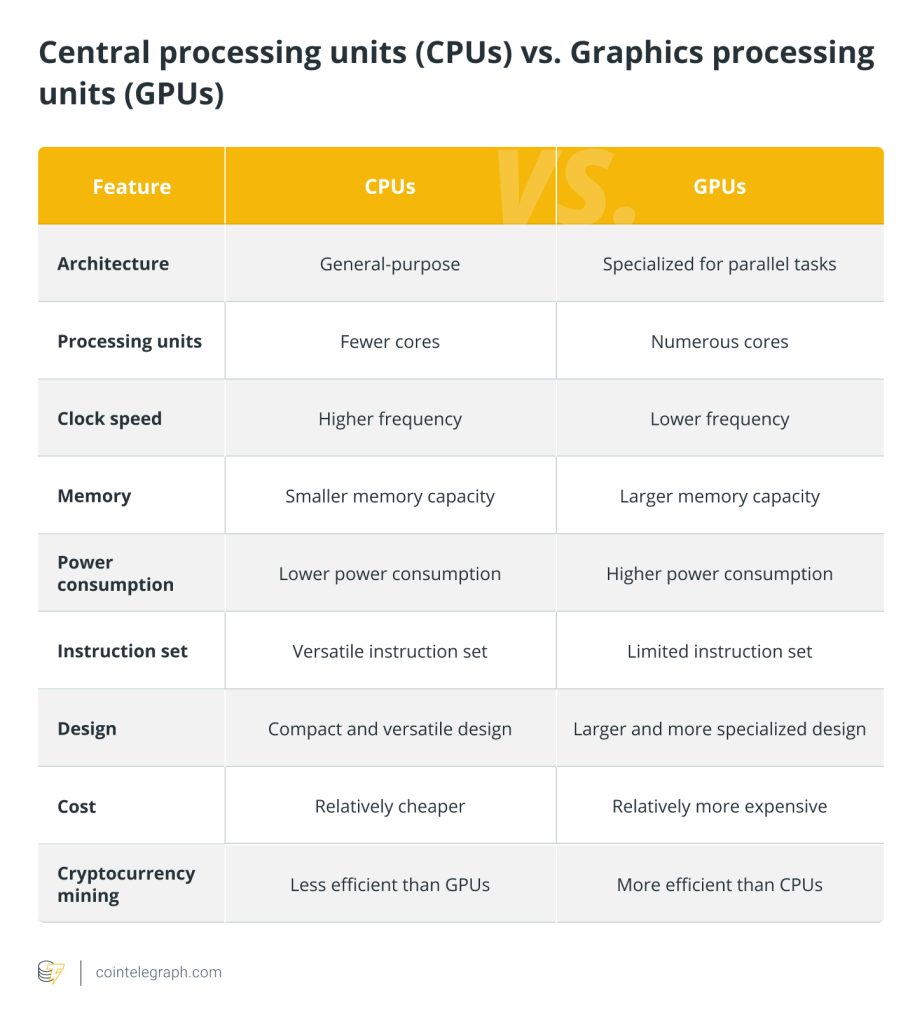

The artificial intelligence (AI) industry faces exponentially growing demand for the computational power needed to train large language models (LLMs). That demand is running into bottlenecks caused by the scarcity and high cost of high-end graphics processing units (GPUs) like Nvidia’s H100 and A100.

An anecdote shared by Larry Ellison, founder and chairman of Oracle, vividly underscores the critical importance and scarcity of high-end GPUs. Ellison recounted how, at a dinner with Jensen Huang, CEO and founder of Nvidia (a GPU manufacturer with a $2 trillion valuation), he and Elon Musk found themselves “begging” for access to Nvidia’s enterprise-grade technology.

Some pics from when Jensen delivered the first @Nvidia AI system to @OpenAI pic.twitter.com/gj4995BKSn

— Elon Musk (@elonmusk) February 18, 2024

Compounded by technological and production constraints, the scarcity of high-end GPUs has resulted in a near-monopoly held by major corporations, stifling innovation and disadvantaging smaller entities and researchers.

Untapped computational power in end-user GPUs

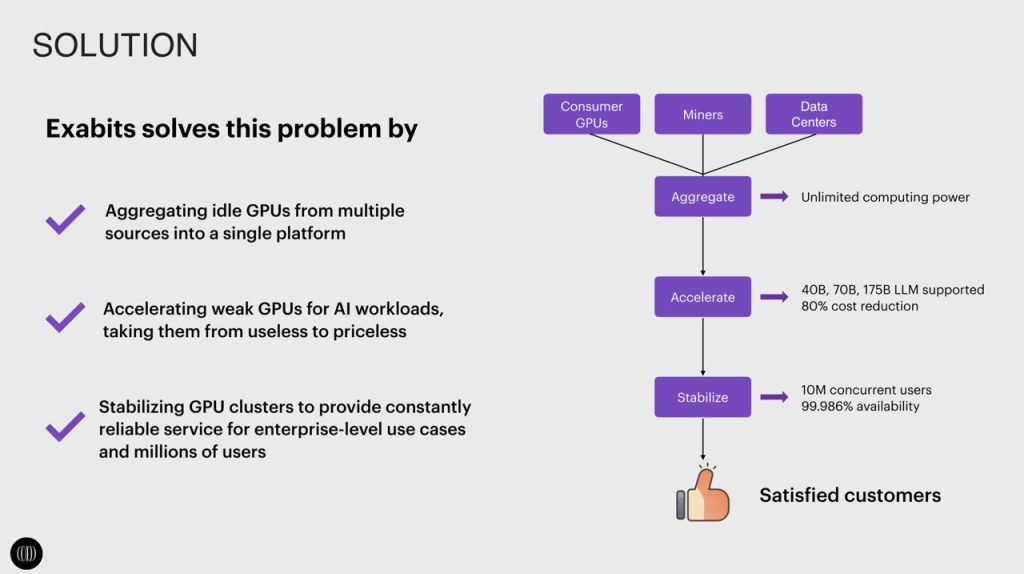

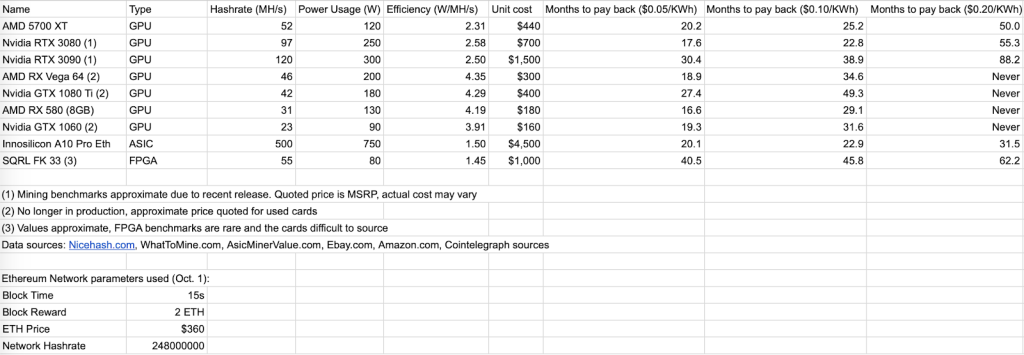

Yet an untapped reservoir of computational power lies dormant in the vast number of consumer-grade GPUs and GPUs previously dedicated to mining, which have the potential to democratize AI development. Realizing that potential requires addressing consumer-grade GPUs’ limitations in memory, computing power and bandwidth, which have traditionally rendered them unsuitable for training large AI models.
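To give a sense of the memory limitation, the sketch below estimates how much memory the weights of a 70-billion-parameter and a 314-billion-parameter model occupy, and how many consumer cards would be needed just to hold them. The 16-bit weight format and the 24 GB card size are illustrative assumptions, not figures from DEKUBE, and training needs considerably more memory than the weights alone (gradients, optimizer state and activations).

```python
import math

BYTES_PER_PARAM_FP16 = 2   # assumption: weights stored as 16-bit floats
CONSUMER_GPU_GB = 24       # assumption: a 24 GB consumer-grade card


def weight_memory_gb(num_params: float,
                     bytes_per_param: int = BYTES_PER_PARAM_FP16) -> float:
    """Memory needed to hold the model weights alone, in GB (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


def min_gpus_for_weights(num_params: float, gpu_memory_gb: float) -> int:
    """Lower bound on the number of cards needed just to store the weights."""
    return math.ceil(weight_memory_gb(num_params) / gpu_memory_gb)


if __name__ == "__main__":
    for label, params in [("70B model", 70e9), ("314B model", 314e9)]:
        gb = weight_memory_gb(params)
        cards = min_gpus_for_weights(params, CONSUMER_GPU_GB)
        print(f"{label}: {gb:.0f} GB of weights, at least {cards} cards")
```

Under these assumptions, neither model fits on a single consumer card, which is why the weights and the work have to be split across many devices.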

DEKUBE aims to revolutionize AI infrastructure by creating one of the largest global networks for AI training, powered by consumer-grade GPUs. This use of consumer GPUs unlocks distributed AI training at scale, fostering a network that is both flexible and cost-efficient. The platform’s approach democratizes AI development, bringing sophisticated LLMs like Llama2 70B and Grok 314B within reach. This capability distinguishes DEKUBE within the industry and marks a significant step toward making advanced AI accessible and achievable.

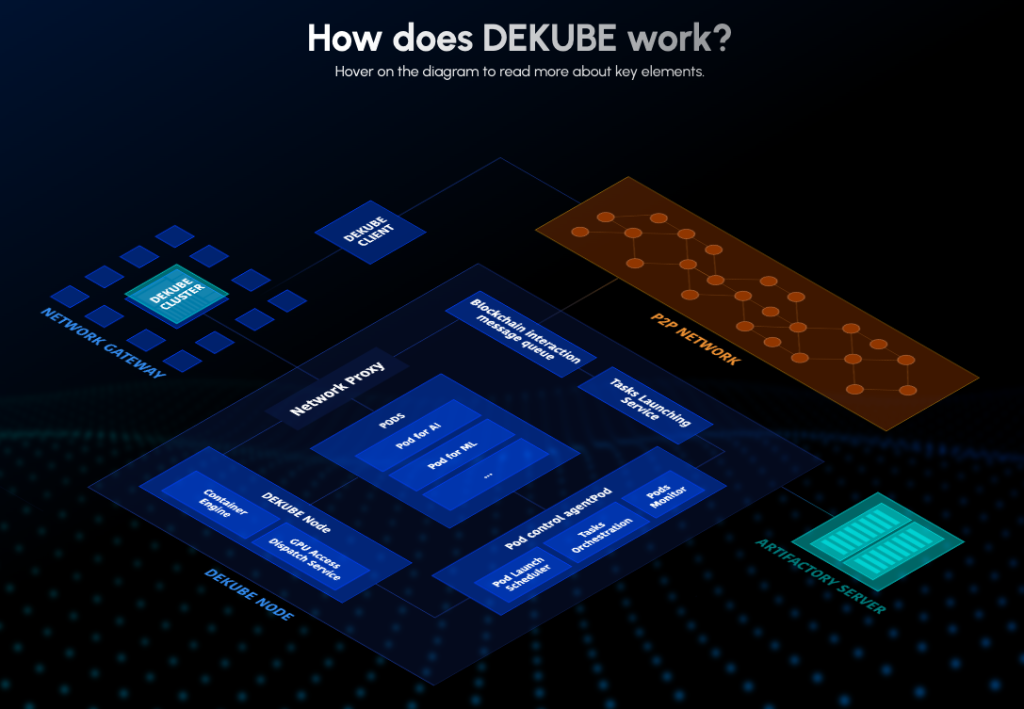

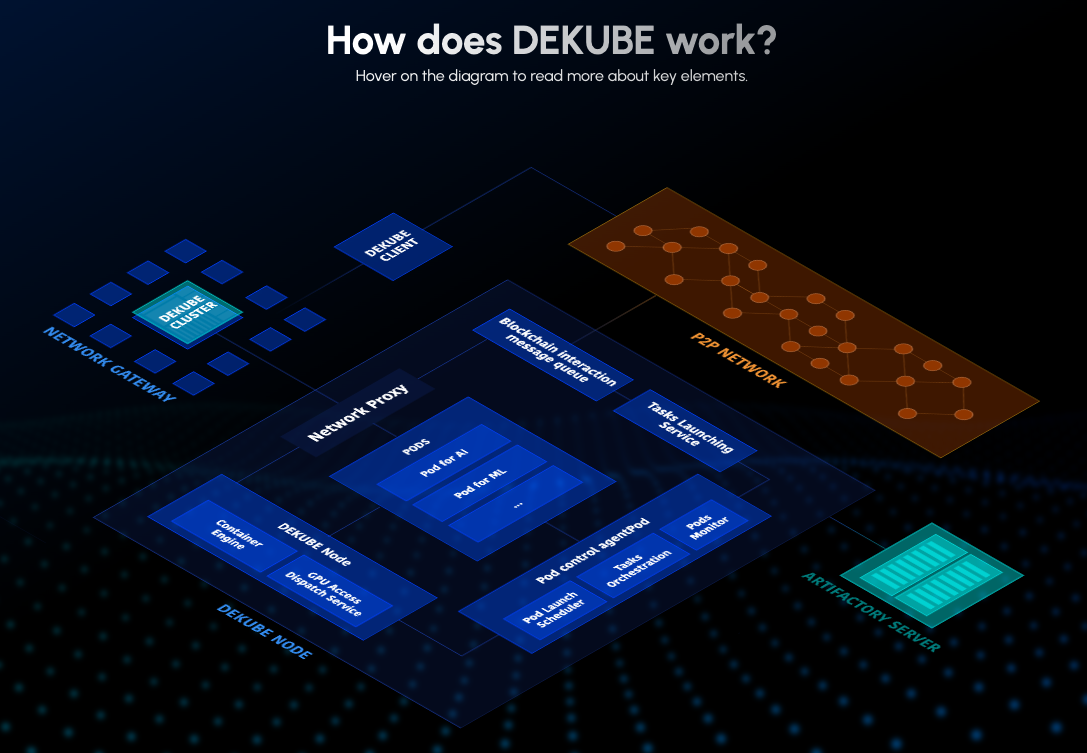

DEKUBE provides a comprehensive ecosystem for both providers and consumers. Source: DEKUBE

A highlight of DEKUBE’s initiative is the GPU mining event, where users can connect their GPUs to the network through a straightforward interface and earn DEKUBE points. These points can later be converted into tokens upon the launch of the mainnet, providing an incentive for community participation and a tangible reward for network contributors.

Overcoming efficiency bottlenecks in AI training

The company’s strategy targets the critical efficiency bottlenecks associated with data transmission and synchronization in cross-regional collaborative LLM training. DEKUBE’s approach allows consumer-level GPUs in household PCs to be integrated into a network capable of delivering enterprise-level AI computing power.
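A rough back-of-the-envelope calculation shows why synchronization is the hard part. The sketch below estimates how long it would take to send one full, uncompressed copy of a 70-billion-parameter model's gradients over a single link. The link speeds (a 100 Mbit/s household uplink and a 400 Gbit/s data-center interconnect) and the 16-bit gradient format are assumptions chosen for illustration. This is a naive model of the problem, not a description of how DEKUBE transmits data.

```python
def sync_seconds(num_params: float,
                 bytes_per_value: int,
                 link_mbit_per_s: float) -> float:
    """Seconds to send one uncompressed copy of the gradients over one link."""
    payload_bits = num_params * bytes_per_value * 8
    return payload_bits / (link_mbit_per_s * 1e6)


NUM_PARAMS = 70e9               # a 70-billion-parameter model
BYTES_PER_GRADIENT = 2          # assumption: 16-bit gradients
HOME_UPLINK_MBIT = 100          # assumption: household uplink speed
DATACENTER_LINK_MBIT = 400_000  # assumption: 400 Gbit/s interconnect

if __name__ == "__main__":
    home = sync_seconds(NUM_PARAMS, BYTES_PER_GRADIENT, HOME_UPLINK_MBIT)
    dc = sync_seconds(NUM_PARAMS, BYTES_PER_GRADIENT, DATACENTER_LINK_MBIT)
    print(f"household uplink: {home / 3600:.1f} hours per exchange")
    print(f"data-center link: {dc:.1f} seconds per exchange")
```

With these numbers, a single naive exchange takes hours on the household link and seconds in the data center, so any network built on home connections has to reduce how much data is sent and how often.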

DEKUBE’s technology offers a comprehensive solution by optimizing various aspects of the process, including the network transmission layer, the LLMs, the data sets used and the training processes. The optimization enables the effective utilization of computational resources from ordinary consumer GPUs, addressing the acute shortage of computational power and the high costs associated with training large AI models.

DEKUBE has successfully deployed Llama2 70B on its distributed compute power network, leveraging a broad array of consumer-grade GPUs. The deployment illustrates the feasibility of utilizing more widely available hardware for training complex AI models, which could influence broader adoption and innovation in the field.

The planned deployment of Grok 314B, one of the largest open-source LLMs with 314 billion parameters, is expected to further test the limits of distributed computing in AI. By integrating Grok into its network, DEKUBE aims to enhance the scalability and accessibility of high-caliber AI technologies.

DEKUBE aims to democratize access to AI. Source: DEKUBE.AI

The platform has garnered industry-wide attention and support, with nearly a hundred technical experts dedicating over three years to its development. Notable AI entrepreneurs and leading global miners contributed to the latest financing round, which raised $20 million to $30 million to build the most extensive distributed AI training infrastructure, with more than 20,000 GPUs online in the second quarter of 2024.

With the testnet’s anticipated launch in May 2024, DEKUBE aims to significantly reduce computational power procurement costs and cycles for AI model developers, accelerating industry-wide technological progress.

DEKUBE will continue to provide computational resources to open-source LLM developers and teams, invest in and incubate outstanding AI projects, and serve as a bridge between those projects and Web3 to promote innovation and development in the industry.

Easier access to high-end GPUs can pave the way for innovation, fostering greater accessibility and enabling a broader audience to contribute to advancements in the field of AI. By tapping into the potential of consumer-grade GPUs and overcoming the challenges of computational power scarcity and high costs, DEKUBE positions itself at the forefront of a transformative movement within the AI sector.
