Groq AI's LPU: The breakthrough answer to ChatGPT's GPU woes?

Groq’s LPU chip emerges as a potential solution to the challenges faced by AI developers relying on GPUs, sparking comparisons with ChatGPT.

The latest artificial intelligence (AI) tool to capture the public’s attention is the Groq LPU Inference Engine, which became an overnight sensation on social media after its public benchmark tests went viral, outperforming top models from other Big Tech companies.

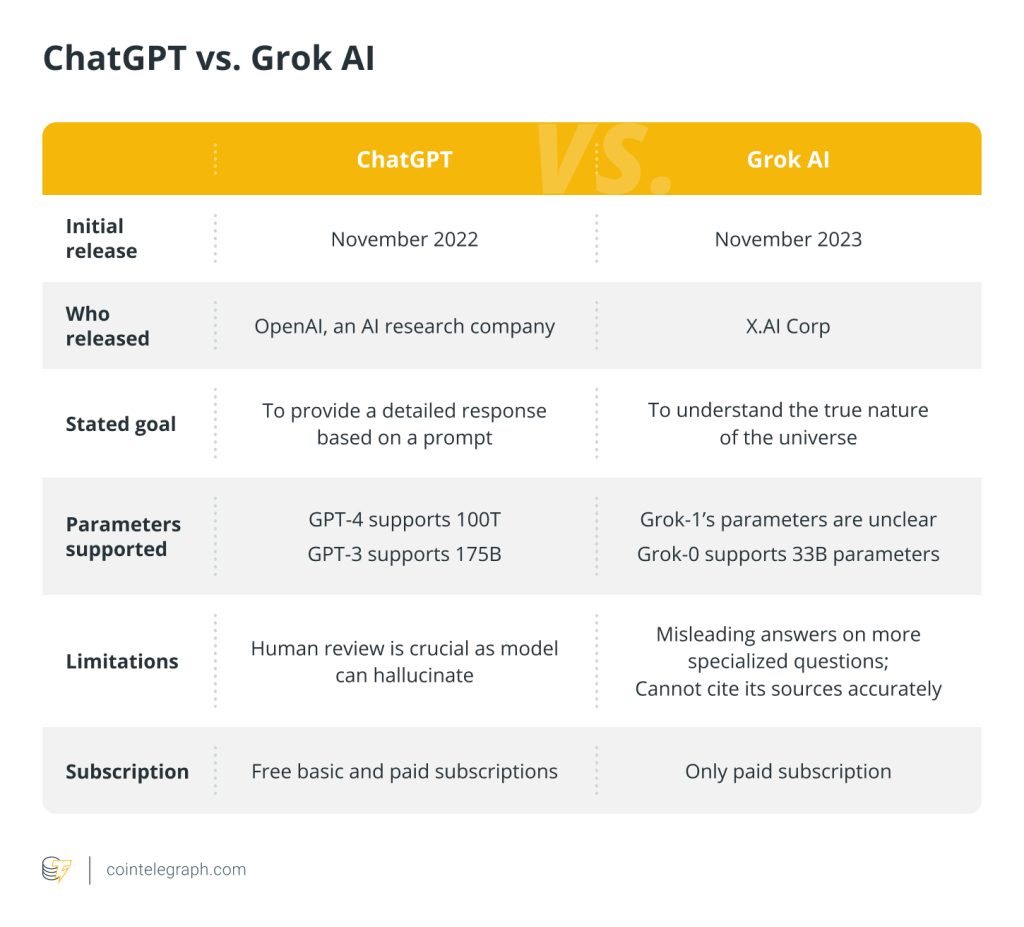

Groq, not to be confused with Elon Musk’s AI model Grok, is not itself a model but a chip system on which models can run.

The team behind Groq developed its own “software-defined” AI chip, called a language processing unit (LPU), designed specifically for inference. The LPU allows Groq to generate roughly 500 tokens per second.

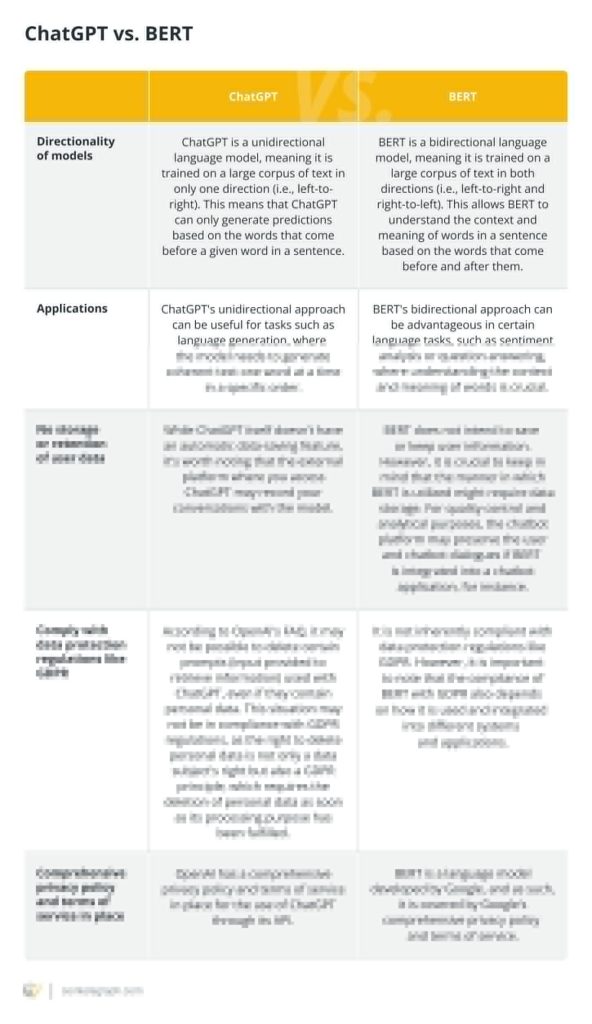

By comparison, GPT-3.5, the publicly available model behind ChatGPT, runs on scarce and costly graphics processing units (GPUs) and can generate around 40 tokens per second. Comparisons between Groq and other AI systems have been flooding X.
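To put the two throughput figures in perspective, the short sketch below converts tokens per second into wall-clock time for a single response. The 1,000-token response length is a hypothetical example, and the two rates come from different models and setups, so the resulting ratio is only a rough illustration, not a controlled benchmark.

```python
# Rough throughput figures quoted in the article (not a controlled benchmark).
LPU_TOKENS_PER_S = 500  # Groq LPU, approximate
GPU_TOKENS_PER_S = 40   # GPT-3.5 on GPUs, approximate


def generation_time_s(num_tokens: int, tokens_per_second: float) -> float:
    """Seconds needed to stream `num_tokens` at a constant generation rate."""
    return num_tokens / tokens_per_second


def per_token_latency_ms(tokens_per_second: float) -> float:
    """Average time between consecutive tokens, in milliseconds."""
    return 1000.0 / tokens_per_second


if __name__ == "__main__":
    response_tokens = 1000  # hypothetical response length
    lpu_s = generation_time_s(response_tokens, LPU_TOKENS_PER_S)
    gpu_s = generation_time_s(response_tokens, GPU_TOKENS_PER_S)
    print(f"LPU: {lpu_s:.1f} s, GPU: {gpu_s:.1f} s")
    print(f"Speed ratio: {LPU_TOKENS_PER_S / GPU_TOKENS_PER_S:.1f}x")
    print(f"Per-token latency: {per_token_latency_ms(LPU_TOKENS_PER_S):.1f} ms "
          f"vs {per_token_latency_ms(GPU_TOKENS_PER_S):.1f} ms")
```

At these rates a 1,000-token answer takes about 2 seconds instead of 25, a roughly 12.5x difference, assuming the rate stays constant for the whole response.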

Groq is a Radically Different kind of AI architecture

Among the new crop of AI chip startups, Groq stands out with a radically different approach centered around its compiler technology for optimizing a minimalist yet high-performance architecture. Groq's secret sauce is this… pic.twitter.com/Z70sihHNbx

— Carlos E. Perez (@IntuitMachine) February 20, 2024

Cointelegraph spoke with Mark Heaps, chief evangelist at Groq, to better understand the technology and how it could transform the way AI systems operate.

Heaps said that Groq’s founder, Jonathan Ross, originally set out to build technology that would keep AI from being “divided between the haves and have nots.”

At the time, tensor processing units (TPUs) were available only to Google for its own systems. LPUs, however, were born because:

“[Ross] and the team wanted anyone in the world to be able to access this level of compute for AI to find innovative new solutions for the world.”

The Groq executive described the LPU as a “software-first designed hardware solution,” explaining that its design simplifies the way data travels, not only across the chip but from chip to chip and throughout a network.

“Not needing schedulers, CUDA libraries, Kernels, and more improves not only performance but the Developer experience,” he said.

“Imagine commuting to work and every red light turned green right as you hit it because it knew when you’d be there. Or the fact is, you wouldn’t need traffic lights at all. That’s what it’s like when data travels through our LPU.”
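The traffic-light analogy describes static, compile-time scheduling: if every operation’s latency is known in advance, a compiler can decide exactly when each step starts, so data arrives just as it is needed and no runtime scheduler has to arbitrate. The toy sketch below illustrates that general idea with as-soon-as-possible (ASAP) scheduling over a small dependency graph. It is a hypothetical illustration, not Groq’s compiler; the op names and cycle counts are invented, and real hardware schedulers must also account for limited functional units and memory bandwidth, which this sketch ignores.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Op:
    name: str
    latency: int                   # cycles; assumed fixed and known at compile time
    inputs: tuple[str, ...] = ()   # names of ops whose outputs this op consumes


def static_schedule(ops: list[Op]) -> dict[str, int]:
    """Assign every op a start cycle ahead of time (ASAP scheduling).

    Assumes `ops` is in dependency order (inputs appear before their consumers).
    Because all latencies are known up front, each op starts exactly when its
    last input finishes: the "green light" arrives on time, with no runtime
    arbitration. Resource limits are deliberately ignored in this toy model.
    """
    finish: dict[str, int] = {}
    start: dict[str, int] = {}
    for op in ops:
        ready = max((finish[i] for i in op.inputs), default=0)
        start[op.name] = ready
        finish[op.name] = ready + op.latency
    return start


# Hypothetical pipeline: two loads feed a matrix multiply, then an activation.
EXAMPLE_OPS = [
    Op("load_a", 2),
    Op("load_b", 3),
    Op("matmul", 4, ("load_a", "load_b")),
    Op("activation", 1, ("matmul",)),
]

if __name__ == "__main__":
    for name, cycle in static_schedule(EXAMPLE_OPS).items():
        print(f"{name:>10} starts at cycle {cycle}")
```

Here `matmul` is scheduled for cycle 3, the moment the slower load finishes, and `activation` for cycle 7. The whole timetable is fixed before execution begins, which is the property the analogy points to.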


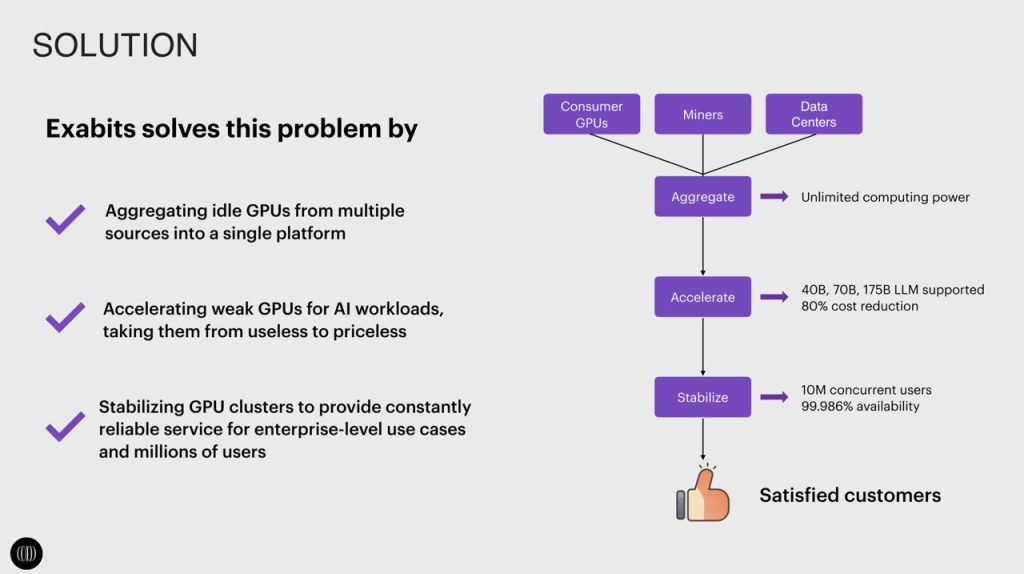

A persistent problem for developers in the industry is the scarcity and cost of the powerful GPUs, such as Nvidia’s A100 and H100 chips, needed to run AI models.

However, Heaps said Groq does not face the same issues because its chip is made on a 14nm process. “This size of die has been used for 10 years in chip design,” he said, “and is very affordable, and readily available. Our next chip will be 4nm and also made in the United States.”

He said GPU systems still have a place in smaller-scale hardware deployments, but that the choice between GPUs and LPUs depends on several factors, including the workload and the model.

“If we’re talking about a large-scale system, serving thousands of users with high utilization of a large language model, our numbers show that [LPUs] are more efficient on power.”

Many of the major developers in the space have yet to adopt LPUs. Heaps attributed this to several factors, one being the relatively recent “explosion of LLMs” over the past year.

“Folks still wanted a one-size-fits-all solution like a GPU which they can use for both their training and inference. Now the emerging market has forced people to find differentiation and a general solution won’t help them accomplish that.”

Aside from the product itself, Heaps also addressed the elephant in the room: the name “Groq.”

Groq was founded in 2016 and trademarked its name shortly afterward, whereas Elon Musk’s AI chatbot “Grok” only appeared on the scene in November 2023 before quickly becoming widely recognized in the AI space.

Heaps said some “Elon fans” assumed the company had tried to “take the name” or that it was a marketing ploy. However, once the company’s history became known, he said, “then folks [got] a little quieter.”

“It was challenging a few months ago when their LLM was getting a lot of press, but right now I think people are taking notice of Groq, with a Q.”

[…] Public demos hit 500 tokens/s on Mixtral 8x7B (x.superex.com). […]