AI computing in 2024: Navigating the surge in demand for generative AI

Doug Petkanis, the co-founder and CEO of Livepeer, shared his insights with Cointelegraph about the escalating demand for AI computing power in 2024 and how companies can manage these requirements.

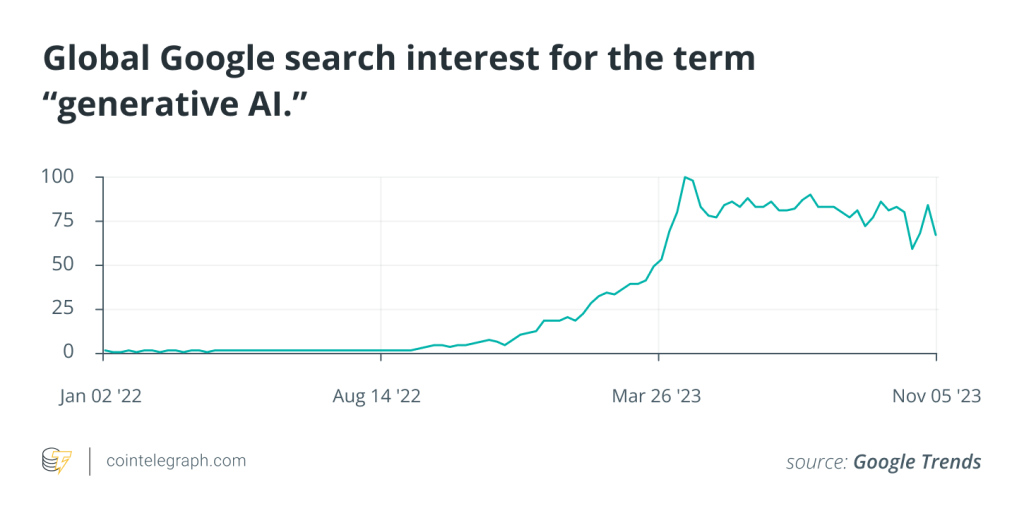

Over the last year, the emergence of accessible generative artificial intelligence (AI) tools unleashed a frenzy of usage among consumers. From answering simple questions to handling extensive work tasks, the technology is becoming a mainstay of everyday life.

The data backs this up, with the most popular AI platforms seeing a massive increase in traffic. OpenAI’s popular AI chatbot ChatGPT has around 180.5 million monthly users as of January 2024.

In this rapidly evolving landscape, however, the demand for computing power to run generative AI is reaching unprecedented levels. As businesses grapple with the complexities of managing this surge in computational requirements, industry experts are trying to find practical solutions.

In an interview with Cointelegraph, Doug Petkanis, the co-founder and CEO of video infrastructure network Livepeer, shared insights into the escalating demand for AI computing power in 2024 and shed light on strategies to manage the burgeoning requirements to run the technology.


Understanding the significance of computing power

Computing power is the backbone of AI development and deployment, as it governs the speed and efficiency of AI models.

According to the Livepeer co-founder, the three stages in the AI lifecycle that typically need substantial computing power are training, fine-tuning, and inference, the stage at which the trained and tuned model produces outputs or predictions based on a set of inputs (also known as prompts).

The urgency for faster responses, however, collides with the economic reality of steep costs.

Petkanis explained that “more computing power generally correlates with faster responses. But there’s always a balance between user experience (speed) and the project’s economic viability (cost).”

Some estimates have OpenAI’s daily operating costs at $700,000. The incredible costs of computing are exacerbated by a shortage of GPUs that are suited for AI computing.

“Thankfully, the same GPUs that are already running in crypto-coordinated DePIN networks, performing tasks like video transcoding and 3D rendering, are well-suited for AI.”

“That’s why these crypto-networks have become such a critical component of the AI boom,” he said. Industry experts have already been pointing to this year’s emerging “power couple” of DePINs and AI.

A conscious consumer

While computing power is becoming a major concern to those developing the technology, Petkanis explained that, per usual, consumers are not in the loop.

“Most people don’t give much thought to infrastructure: where the power comes from, how the internet works, the cost or carbon footprint of a Google search,” he said. “They want utilities to work on demand, every time.”

He said the same goes for AI computing power. Most users don’t think about the energy, usage, or computing cost of the prompts they type into their favorite chatbot. “They care about speed and relevancy,” he said.

“Consumers won’t notice issues with computing power until the costs get passed on in the form of an increased quantity of ads, decreased speed/quality of responses or rising subscription costs.”

National and global implications

Addressing broader implications, Petkanis expressed concerns about the monopolization of scaled AI platforms.

Just as the dawn of the internet ushered in a group of companies that are now collectively known as “Big Tech” – Alphabet (Google), Amazon, Apple, Meta (Facebook and Instagram), and Microsoft – the beginning of the AI era sees the same mega companies racing for dominance.

“These handful of big tech companies already own large proprietary data sets farmed from customer data to train models, they have spent billions training these models…”

Because these companies own the full stack, they could essentially “arbitrarily insert” their own biases into how the models respond to a given set of inputs. In theory, they could also censor which inputs users can provide and which outputs the models return.

Petkanis therefore stressed the importance of the open-source AI movement. He said it is already visible in the aforementioned DePIN infrastructure, which can help ensure accessibility and mitigate the risks associated with centralization.

“Countries should be supporting these movements to ensure this wave of innovation is accessible to all and benefits a worldwide population.”

The AI boom is only just beginning. As it continues to evolve, Petkanis and many others at the forefront of these developments already predict a “whole new set” of economic, environmental and social considerations.
