FTC’s rule update targets deepfake threats to consumer safety

The Federal Trade Commission’s updated rule empowers the agency to initiate federal court cases directly to compel scammers to return funds acquired through impersonating government or business entities.

In light of the increasing danger of deepfakes, the Federal Trade Commission (FTC) is seeking to extend an existing regulation that prohibits the impersonation of businesses or government agencies so that it protects all consumers from impersonation, including impersonation carried out with artificial intelligence (AI).

The updated regulation, subject to final language and public feedback received by the FTC, could make it illegal for a generative artificial intelligence (GenAI) platform to offer products or services that it knows may be used to harm consumers through impersonation.

Emphasizing this, the FTC chair, Lina Khan, said in a press release:

“With voice cloning and other AI-driven scams on the rise, protecting Americans from impersonator fraud is more critical than ever. Our proposed expansions to the final impersonation rule would do just that, strengthening the FTC’s toolkit to address AI-enabled scams impersonating individuals.”

The FTC’s updated Government and Business Impersonation Rule empowers the agency to initiate federal court cases directly to compel scammers to return funds acquired through impersonating government or business entities.

2. Scams where fraudsters pose as the government are highly common. Last year Americans lost $2.7 billion to impersonator scams.

The rule @FTC just finalized will let us levy penalties on these scammers and get back money for those defrauded. https://t.co/8ON0G63ZjL

— Lina Khan (@linakhanFTC) February 15, 2024

The final rule on government and business impersonation will become effective 30 days after publication in the Federal Register. The public comment period for the Supplemental Notice of Proposed Rulemaking (SNPRM) will be open for 60 days after the notice is published in the Federal Register, which will include details on how to submit comments.

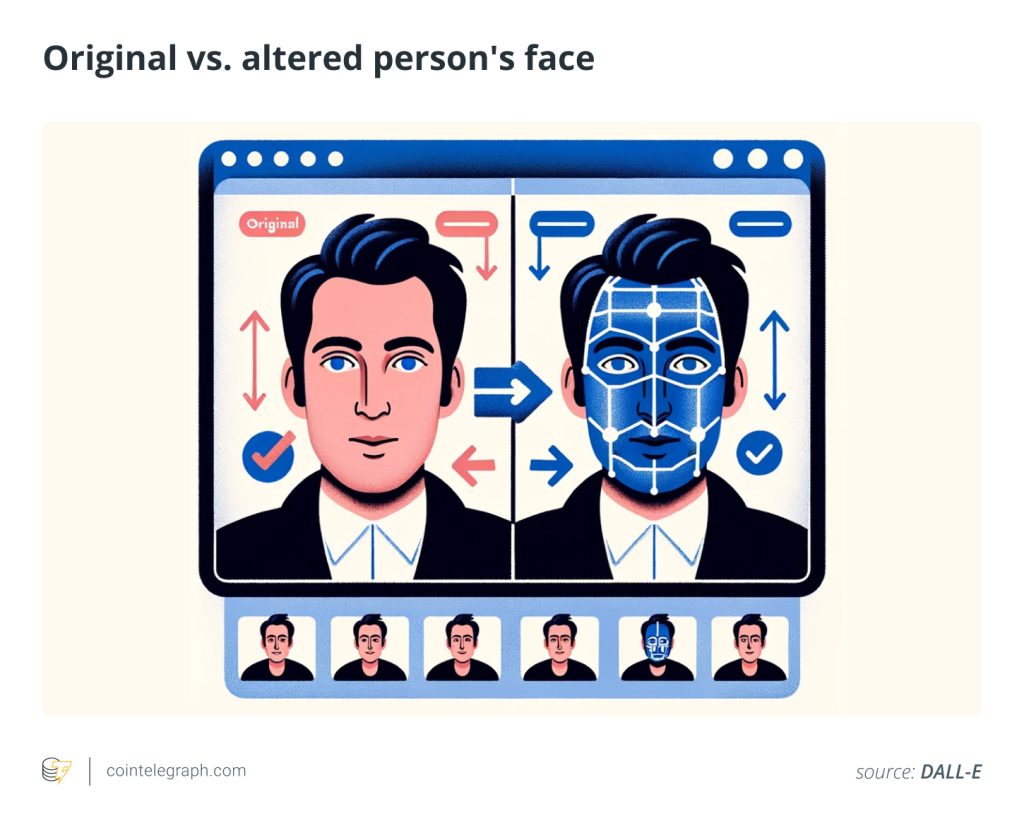

Deepfakes use AI to create manipulated videos by altering someone’s face or body. While no federal laws address the sharing or creation of deepfake images, some lawmakers are taking steps to address this issue.

Celebrities and individuals who become deepfake victims can, in theory, use established legal options like copyright laws, rights related to their likeness, and various torts (such as invasion of privacy or intentional infliction of emotional distress) to seek justice. However, pursuing cases under these diverse laws can be lengthy and demanding.

On Feb. 8, the Federal Communications Commission (FCC) banned AI-generated robocalls by reinterpreting a rule that forbids spam messages made by artificial or pre-recorded voices. This move came shortly after a phone campaign in New Hampshire that used a deepfake of President Biden to discourage people from voting. In the absence of action from Congress, several states have passed their own laws making deepfakes illegal.
